On September 24, Alibaba Group introduced a major breakthrough in artificial intelligence: Qwen3-Max, the most advanced and capable model in its latest AI lineup. This launch signals Alibaba’s growing ambition in the global AI race and offers powerful new tools for developers, researchers, and businesses.

In this article, we’ll explore what makes this new model stand out, its technical strengths, and how it fits within Alibaba’s broader model family.

Understanding Qwen3-Max and Its Series

Qwen3 is the latest generation of large language models (LLMs) developed by Alibaba Cloud’s Tongyi Lab. It builds on the success of earlier Qwen models and includes several versions designed for different use cases—from lightweight tasks to heavy-duty reasoning.

Qwen3-Max is the flagship model in this series. Think of it as the “premium” version—built for complex, high-stakes tasks that require deep understanding, long reasoning chains, and top-level accuracy. While other Qwen3 models are great for everyday applications, Qwen3-Max is made for the toughest challenges.

Alibaba designed the new model to handle everything from advanced coding and scientific research to multi-step business analysis. Alibaba's pitch is that it is not only bigger but also more accurate, faster, and more reliable in demanding scenarios.

Massive Scale, Smarter Design

According to Alibaba's official information, Qwen3-Max has over 1 trillion parameters, a large jump over the rest of the Qwen3 series. For comparison, the largest open-weight Qwen3 model, Qwen3-235B-A22B, has 235 billion total parameters, so Qwen3-Max is more than four times its size. This scale gives Qwen3-Max more capacity to learn and represent complex information.

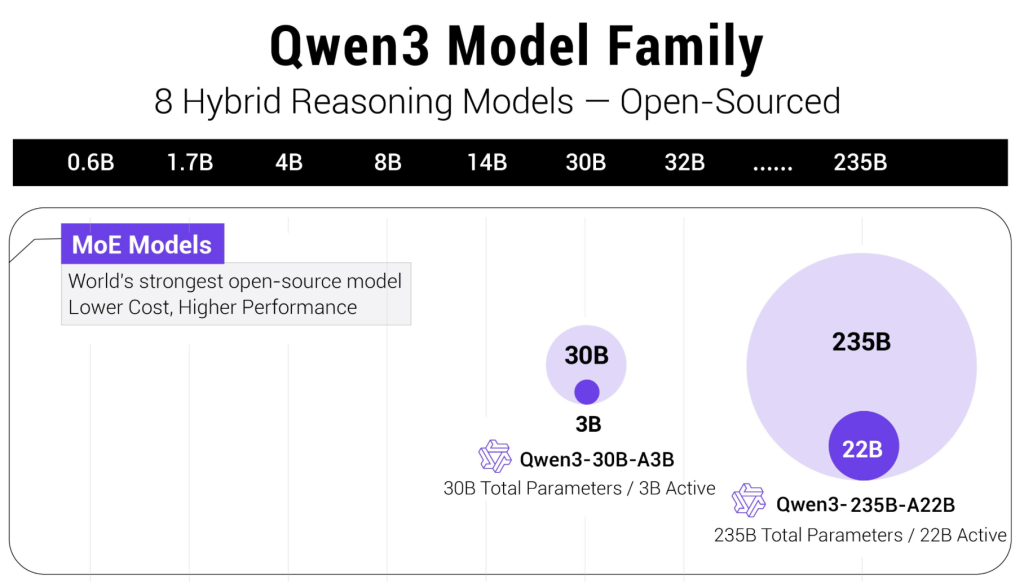

Qwen3-Max also uses a Mixture-of-Experts (MoE) architecture, which keeps it efficient despite its scale. The model is divided into many "expert" sub-networks, and a learned router activates only a few relevant experts for each token. As a result, the model does not use all 1 trillion parameters for every token, which reduces computational cost and improves speed.

Compared to traditional models with the same parameter scale, its training efficiency is 30% higher, according to Alibaba.
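To make the routing idea concrete, here is a minimal, illustrative sketch of top-k MoE routing for a single token in plain Python. It is not Qwen3-Max's actual implementation, which is not described at this level of detail: the toy dimensions, the dot-product router, and the `moe_forward` and `make_expert` helpers are all hypothetical. What it shows is the property described above: the router scores every expert, but only the top-k experts actually run.

```python
import math
from typing import Callable, List

Vector = List[float]


def softmax(xs: Vector) -> Vector:
    # Subtract the max before exponentiating for numerical stability.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(token: Vector, router_weights: List[Vector],
                experts: List[Callable[[Vector], Vector]], top_k: int = 2) -> Vector:
    """Route one token to its top-k experts and mix their outputs (hypothetical helper)."""
    # 1. The router scores every expert with a dot product and normalizes the scores.
    scores = [sum(w * x for w, x in zip(weights, token)) for weights in router_weights]
    probs = softmax(scores)

    # 2. Only the k highest-scoring experts are selected; the others never run.
    chosen = sorted(range(len(experts)), key=lambda i: probs[i], reverse=True)[:top_k]
    gate_total = sum(probs[i] for i in chosen)

    # 3. Run the chosen experts and combine their outputs with renormalized gate weights.
    output = [0.0] * len(token)
    for i in chosen:
        gate = probs[i] / gate_total
        expert_out = experts[i](token)
        output = [o + gate * e for o, e in zip(output, expert_out)]
    return output


# Toy, hypothetical setup: 8 experts that each scale the input, plus a call log.
calls: List[int] = []


def make_expert(idx: int, scale: float) -> Callable[[Vector], Vector]:
    def expert(x: Vector) -> Vector:
        calls.append(idx)  # record which experts actually ran
        return [scale * v for v in x]
    return expert


experts = [make_expert(i, float(i + 1)) for i in range(8)]
router = [[float(i), -float(i)] for i in range(8)]  # expert i scores i on input [1, 0]
out = moe_forward([1.0, 0.0], router, experts, top_k=2)
print(sorted(calls))  # → [6, 7]: only 2 of the 8 experts were evaluated
```

The compute saving comes from step 2: experts outside the top-k are never called, so per-token cost scales with k rather than with the total number of experts.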

Advanced Reasoning Capabilities

One of the standout features of this model is its ability to reason step by step. Instead of generating quick guesses, it constructs logical chains to arrive at well-supported conclusions—a technique known as chain-of-thought reasoning.

For instance, when solving a complex math problem or debugging code, it doesn’t just provide an answer. It explains each step, checks for inconsistencies, and adapts based on feedback. This makes it especially valuable in education, software development, and technical consulting.

It also maintains strong performance in:

- Long, multi-turn conversations with consistent context tracking

- Generating and explaining code across multiple programming languages

- Analyzing documents that span thousands of words

- Drawing connections across diverse knowledge domains

These abilities stem from both its architecture and the way it’s trained—prioritizing depth over speed where it matters most.
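From the application side, long multi-turn conversations usually work by resending the earlier turns with every request. The sketch below assumes the widely used role/content message format (system, user, assistant); the `Conversation` class and its character-based budget are hypothetical simplifications, since a real client would count tokens with the model's own tokenizer.

```python
from typing import Dict, List

Message = Dict[str, str]


class Conversation:
    """Hypothetical helper that keeps chat history for multi-turn requests."""

    def __init__(self, system_prompt: str, max_chars: int = 20_000) -> None:
        self.system: Message = {"role": "system", "content": system_prompt}
        self.turns: List[Message] = []
        # Character budget: a crude stand-in for a real token limit.
        self.max_chars = max_chars

    def add(self, role: str, content: str) -> None:
        self.turns.append({"role": role, "content": content})
        self._trim()

    def _size(self) -> int:
        return len(self.system["content"]) + sum(len(m["content"]) for m in self.turns)

    def _trim(self) -> None:
        # Drop the oldest turns (never the system prompt or the newest turn)
        # until the history fits the budget again.
        while len(self.turns) > 1 and self._size() > self.max_chars:
            self.turns.pop(0)

    def messages(self) -> List[Message]:
        # Everything that would be sent with the next request.
        return [self.system] + self.turns


conv = Conversation("Be concise.", max_chars=45)
conv.add("user", "First question?")
conv.add("assistant", "First answer.")
conv.add("user", "Second question?")
print([m["role"] for m in conv.messages()])  # → ['system', 'assistant', 'user']
```

Dropping the oldest turns first is the simplest policy; a larger context window simply lets more history survive before trimming starts.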

Key Strengths That Set It Apart

Several features make the flagship model a compelling choice for advanced applications:

- Top-tier performance: It leads the series in benchmark tests for reasoning, coding, and language understanding.

- Full-context utilization: Unlike smaller variants, it can devote its entire context window to reasoning and output.

- Enterprise integration: It’s optimized for secure, scalable deployment through Alibaba Cloud.

- Multilingual fluency: Strong in Chinese and highly capable in English, Spanish, French, and other major languages.

- Balanced efficiency: Despite its size, inference is accelerated through hardware-aware optimizations.

These advantages make it well-suited for building intelligent assistants, research tools, or data analysis platforms that require reliability and depth.

Model Comparison: Choosing the Right Fit

Not every use case requires the full power of the flagship. Alibaba offers a range of models to match different needs. The table below highlights key technical differences across the series:

| Model | Tier | Context window (tokens) | Max input (tokens) | Max reasoning (tokens) | Max output (tokens) |
|---|---|---|---|---|---|
| 0.5B | Lightweight | 32,768 | 32,768 | 8,192 | 8,192 |
| 1.8B | Balanced | 32,768 | 32,768 | 12,288 | 12,288 |
| 4B | General | 32,768 | 32,768 | 16,384 | 16,384 |
| 7B | Advanced | 32,768 | 32,768 | 24,576 | 24,576 |
| 14B | High-End | 32,768 | 32,768 | 28,672 | 28,672 |
| Max | Flagship | 32,768 | 32,768 | 32,768 | 32,768 |

What this means:

The flagship model is the only one that can dedicate the full 32,768-token window to internal reasoning and output. The other models must split this space between input and response, and their reasoning and output caps are lower (8,192 to 28,672 tokens), which limits their ability to handle deeply layered tasks. For applications requiring long, structured thinking, such as summarizing a 50-page report or planning a multi-phase project, this full utilization is a major advantage.
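The arithmetic behind the comparison table can be sketched as a small budget calculation. This assumes that the prompt and all generated tokens share one context window and that the table's last column is a hard cap on generated tokens; the `generation_budget` helper and the `min()` rule are illustrative assumptions, not a documented Alibaba formula.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelLimits:
    context_window: int  # tokens shared by the prompt and everything generated
    max_output: int      # cap on generated tokens (last column of the table)


# Values copied from the article's comparison table.
LIMITS = {
    "0.5B": ModelLimits(32_768, 8_192),
    "1.8B": ModelLimits(32_768, 12_288),
    "4B": ModelLimits(32_768, 16_384),
    "7B": ModelLimits(32_768, 24_576),
    "14B": ModelLimits(32_768, 28_672),
    "Max": ModelLimits(32_768, 32_768),
}


def generation_budget(model: str, input_tokens: int) -> int:
    """Tokens left for reasoning plus output once the prompt is in the window.

    Assumption: generation is limited by whichever is smaller, the space left
    in the context window or the model's output cap.
    """
    limits = LIMITS[model]
    if input_tokens >= limits.context_window:
        raise ValueError("the prompt alone fills the context window")
    return min(limits.context_window - input_tokens, limits.max_output)


print(generation_budget("0.5B", 2_000))  # → 8192 (capped by max_output)
print(generation_budget("Max", 2_000))   # → 30768 (capped by remaining window)
```

With the same 2,000-token prompt, the smallest model hits its output cap long before the window is full, while the flagship can use nearly all of the remaining space.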

Who Should Use the Flagship Model?

This top-tier model is best for:

- Enterprises building mission-critical AI systems (e.g., customer intelligence, risk analysis)

- Researchers tackling open-ended or interdisciplinary problems

- Developers creating tools that demand high reliability and depth

- Content professionals needing detailed, well-reasoned drafts from long source material

For simpler tasks—like basic chatbots, short-form content, or real-time translation—smaller models in the series offer better cost and speed efficiency.

Usage Instructions: Qwen3-Max

Qwen3-Max is available in Qwen Chat and, for API access, through Alibaba Cloud Model Studio (Bailian).
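For programmatic access, Alibaba Cloud Model Studio (Bailian) offers an OpenAI-compatible chat-completions endpoint. The sketch below uses only the Python standard library. Treat the base URL (the international endpoint shown here), the model ID `qwen3-max`, and the `DASHSCOPE_API_KEY` environment variable as assumptions to check against the current Model Studio documentation; accounts in mainland China typically use `https://dashscope.aliyuncs.com/compatible-mode/v1` instead.

```python
import json
import os
import urllib.request

# Assumptions to verify in the Model Studio docs: base URL, model ID, env var name.
BASE_URL = "https://dashscope-intl.aliyuncs.com/compatible-mode/v1"
MODEL_ID = "qwen3-max"


def build_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build a chat-completions request in the OpenAI-compatible format."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the request and return the assistant's reply text."""
    request = build_request(prompt, os.environ["DASHSCOPE_API_KEY"])
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Only make a network call when an API key is actually configured.
if __name__ == "__main__" and "DASHSCOPE_API_KEY" in os.environ:
    print(ask("Explain mixture-of-experts routing in two sentences."))
```

Keeping request construction separate from sending makes it easy to inspect the payload, or to swap in the official `openai` client pointed at the same base URL.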

Looking Ahead

Alibaba’s September 24 launch marks a significant milestone in its AI journey. By delivering a model that combines scale, intelligence, and practicality, the company is positioning itself as a serious contender in the global foundation model space.

As AI continues to evolve, the focus is shifting from raw size to smart design and real-world impact. The new flagship embodies this shift—proving that the future of AI isn’t just about being big, but about being thoughtful, accurate, and useful.

Whether you’re exploring AI for business or research, this latest release offers powerful new possibilities worth watching closely.

If you're interested in AI tools more broadly, you may also want to read our comparison of DeepSeek and ChatGPT.